Hashing is a critical technique in computer science used to efficiently retrieve or store data. At its core, hashing converts an input (or ‘key’) into a fixed-size string of bytes, typically for indexing data in hash tables. This method is widely used in scenarios where fast data retrieval is important, such as databases, file systems, and cryptographic applications.

In this article, we will explore how hashing works, its applications, types of hash functions, and common issues like collisions and how to handle them.

Table of Contents

Hashing

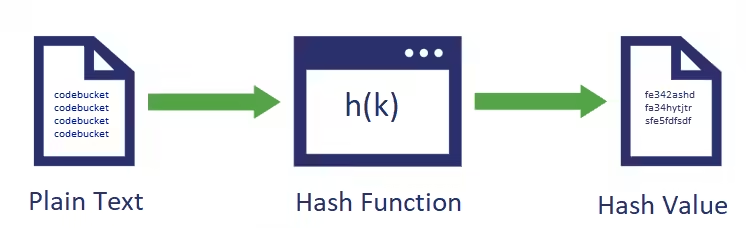

A hash function is a mathematical function that converts an arbitrary size of data into a fixed-sized value. This process is called hashing, and the fixed-size value is called the hash value.

Hash Function

A hash function is a mathematical function that maps input data to a numerical hash value. An ideal hash function should meet the following requirements:

- Deterministic: The same input should always produce the same hash value.

- Collision resistance: It should be difficult to find two different messages, m1 and m2, such that hash(m1) = hash(m2)

- Preimage Resistance: Given a hash value h, it should be hard to find any message m such that h = hash(m) i.e. one-way function. It should be hard to deduce the original text from the hash values.

- Second Preimage Resistance: Given an input m1, it should be difficult to find another input m2 such that m1 ≠ m2 and hash(m1)=hash(m2).

- Avalanche effect: Even a slight change in the input should have a broader effect on the hash value.

Example: SHA256

H(codebucket) = f50880adda4fb9b8f8940b0583af135aa245407aa73047596cc7e77149c5cc6c

H(Codebucket) = 3a6b3ccc63b5024ce4effc574eee4058ea9271c82e64a36e34ba14103c38af61Note:

- At first glance, second preimage resistance and collision resistance can seem quite similar because they both deal with finding different inputs that produce the same hash value.

- In the second preimage, You’re given one specific input m1, and the challenge is to find a different input m2 that produces the same hash as m1.

- In the collision, we are free to choose any two inputs m1 and m2 that produce the same hash.

- Both deal with finding inputs that produce the same hash value, but the starting conditions are different. In the second preimage resistance, you’re bound to a specific input, while in collision resistance, you have more freedom to find any pair of inputs.

- A second preimage is also a collision, but second preimages are supposed to be substantially harder. If the hash function has an output of n bits, then the cost of finding a collision is 2n/2, while the cost of finding a second-preimage is 2n (i.e. a lot more).

Use of hash function

- Data integrity check:

- Bypassing the entire input data file into the hash function to generate the hash value(checksums). Even with the slight modification of input, checksums will change. This ensures the integrity of data.

- By comparing these checksums, we can ensure the integrity of data or any digital assets(like software, video, image etc.)

- Password Storage

- Instead of storing passwords in plain text, mostly all the logon processes store the hash values of passwords in the file. Nobody can guess what the actual password is, by referring to the hash.

- Data Retrieval

- When we want to retrieve one record from many in a file, searching the file for the required record takes time and varies with the number of records. If we can generate a hash for the record’s key, we can use that hash value as the “address” of the record and move directly to it; this takes the same time regardless of the number of records in the file.

Popular hash functions

- SHA Secure Hash Algorithm: SHA1, SHA2, SHA3

- MD Message Digest: MD2,MD4,MD4

Simple Hash Function

It’s just simple hash, not to be used for practical purpose

def simple_hash(s):

hash_value = 0

for i, char in enumerate(s):

# Convert character to integer (ASCII), multiply by a prime, and add

hash_value += (i + 1) * ord(char)

hash_value %= 100000 # Keep it in a fixed range

return hash_value

'''

print(simple_hash("hello")) # Output: 1617 (varies by design)

print(simple_hash("world")) # Output: 1615

print(simple_hash("hell")) # Output: 1062

'''- ord(char) gives the ASCII value of the character.

- (i + 1) * ord(char) mixes position + character.

- % 100000 keeps the hash within 5-digit range (simulate “fixed size”).

- Output is an integer hash.

Hash Table (Hash Map)

A hash table (also called a hash map) is a data structure that stores key-value pairs and allows very fast access to data — usually in constant time: O(1).

If we want to store contact details like names and phone numbers, the first idea that might come to mind is using an array. However, searching in an array takes O(n) time, meaning as the number of contacts grows, the search becomes slower. You could consider binary search, which improves search time to O(log n), but that requires the array to be sorted. Keeping the data sorted introduces overhead, making insertions and updates more complex.

What’s the next option? A balanced binary search tree (BST) ensures all operations—searching, inserting, and deleting—are done in O(log n) time. While this is a significant improvement, we might wonder if there’s a more efficient solution.

You might then think of using a Direct Access Table, where the phone number serves as the index, like

array[984456236] = 'Anil'. This would give O(1) time complexity for all operations, as we can directly access any contact. However, this method has a serious downside—space inefficiency. If phone numbers are large, you’d need an enormous amount of memory. For example, for phone numbers with 10 digits, the array would require O(10^n) space, which is impractical.

That’s where hash table comes in.

Hashing provides a highly efficient solution, offering O(1) average time complexity for operations like search, insert, and delete. In the worst case, it could be O(n), but such scenarios are rare if the hash function is well-designed. Hashing converts data into a smaller, fixed-size format, and this “hash” is used as an index in a hash table. This method strikes a good balance between time and space efficiency, making it ideal for storing large sets of contact details.

Definition

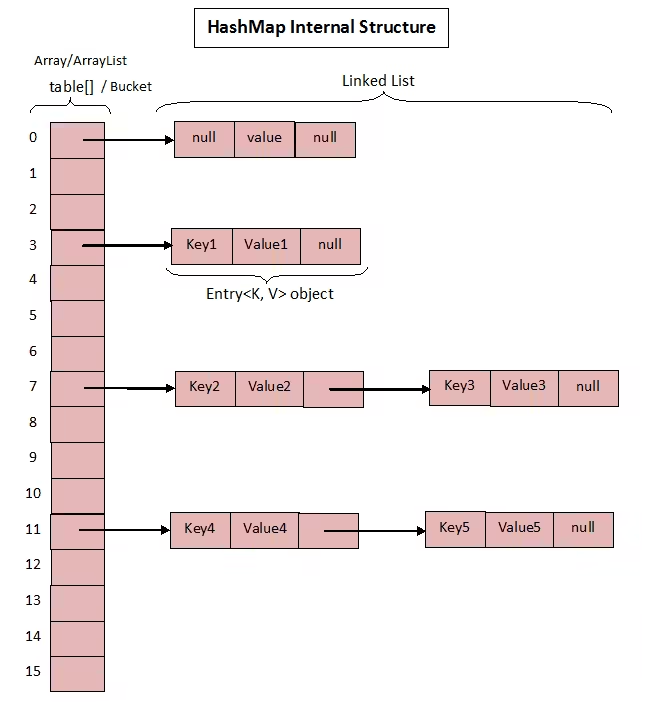

- A hash table (or hash map) is a data structure that implements an associative array abstract data type, a structure that can map keys to values.

- A hash table is a data structure that is used to store keys/value pairs. It uses a hash function to compute an index into an array in which an element will be inserted or searched.

- Hash tables are widely used because they offer efficient insertion, deletion, and lookup operations, typically achieving constant time complexity, O(1), under ideal conditions.

Relation between hash and hash table

A hash table uses that hash to decide where to store or find the value in an internal array.

Simple Hash Table

We’ll use:

- A Python list as the underlying array

- Each index of the list will hold a bucket (a list of key-value pairs)

class HashTable:

def __init__(self, size=10):

self.size = size

self.table = [[] for _ in range(self.size)] # Create empty buckets

def _hash(self, key):

# Very simple hash function: sum of character codes % table size

return sum(ord(c) for c in str(key)) % self.size

def put(self, key, value):

index = self._hash(key)

bucket = self.table[index]

# Check if key already exists, update it

for i, (k, v) in enumerate(bucket):

if k == key:

bucket[i] = (key, value)

return

# Otherwise, insert new key-value pair

bucket.append((key, value))

def get(self, key):

index = self._hash(key)

bucket = self.table[index]

for (k, v) in bucket:

if k == key:

return v

return None # Not found

def __str__(self):

return str(self.table)

ht = HashTable()

ht.put("apple", 100)

ht.put("banana", 200)

ht.put("orange", 150)

print(ht.get("banana")) # Output: 200

print(ht.get("grape")) # Output: None (not found)

print(ht) # See internal table

'''

[[('apple', 100)], [], [], [], [], [], [('orange', 150)], [], [], [('banana', 200)]]

'''Collision Handling Techniques

Collisions occur when two different keys generate the same hash code, which leads to both keys trying to occupy the same bucket. Common techniques for handling collisions include:

- Chaining: Each bucket in the hash table contains a list or chain of key-value pairs that hash to the same index. When a collision occurs, the key-value pair is simply appended to the list at that index.

- Open Addressing: Instead of storing multiple elements in the same bucket, open addressing probes the table to find another open bucket. Various probing techniques are used:

- Linear Probing: Increments the index sequentially (i.e., checking the next bucket) until an open bucket is found.

- Quadratic Probing: Uses a quadratic function to find the next available bucket.

- Double Hashing: A second hash function is applied to find an alternate bucket.

Time Complexity

- Insertion: In an ideal case (with a good hash function and minimal collisions), inserting an element takes O(1) time. In a worst-case scenario, such as when many collisions occur, the time complexity can degrade to O(n), where n is the number of elements in the table.

- Search: The average time complexity for searching an element is O(1). However, if collisions are not well managed, it can become O(n) in the worst case.

- Deletion: Like insertion and search, deletion in a hash table usually takes O(1) time, assuming efficient collision handling.

Applications of Hash Tables

Hash tables are incredibly versatile and are used in many real-world applications, such as:

- Databases: Used to index records for fast data retrieval.

- Caching: Hash tables are used to implement caches where quick lookups are essential.

- Dictionaries: Implementing dynamic associative arrays, like Python’s

dictor JavaScript’sObject. - Symbol Tables: In compilers and interpreters, hash tables store variable names (symbols) and their associated values.

Python’s Dictionary as a Hash Table

Python’s built-in dict type is a highly optimized hash table implementation. Python automatically handles resizing and uses open addressing with a form of quadratic probing for collision resolution. Additionally, Python dictionaries maintain insertion order since Python 3.7, making them predictable when iterated.

# Inserting into a hash table

hash_map = {'name': 'Alice', 'age': 30, 'city': 'New York'}

# Accessing values

print(hash_map['name']) # Output: Alice

# Inserting new key-value pair

hash_map['job'] = 'Engineer'Hash Map vs. Hash Table

Though often used interchangeably, there is a subtle difference between a hash map and a hash table:

- Hash Table: Traditionally, a hash table is implemented as a fixed-size array where each element is called a bucket or slot. Each bucket stores key-value pairs. The hash table uses a hash function to calculate an index from the key, determining where the corresponding value will be stored. Classic hash table implementations do not necessarily handle concurrency.

- Hash Map: A modern version of a hash table that often resizes dynamically, allowing it to efficiently handle more keys. In many programming languages, hash maps provide synchronization or concurrency controls, like thread safety (e.g.,

ConcurrentHashMapin Java).

For the purpose of this article, the terms hash map and hash table will be used interchangeably since the underlying principles are quite similar.

Conclusion

Hash tables are an essential data structure for efficient data storage and retrieval, especially in scenarios where performance is critical. By understanding the mechanics of hash functions, collision handling, and other underlying concepts, developers can optimize the use of hash tables in their applications.