Dynamic Programming (DP) is an optimisation technique that solves complex problems by breaking them down into smaller, overlapping subproblems and storing their results to avoid redundant calculations.

Table of Contents

About DP

- Solver smaller sub-problems

- Stored the results of smaller problems

- Reuse the stored result to solve the actual problem

Why do we even need dynamic programming?

Before DP, let’s understand the problem DP is solving. While solving a problem, we often end up solving the same smaller problems again and again. This causes exponential time and wasted effort.

This leads to:

- Huge time complexity (exponential)

- Slow or impossible solutions for large inputs

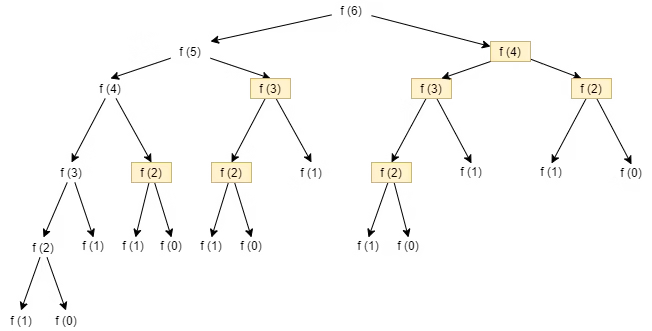

Example: Fibonacci

To compute for fib(6) , fib(4), fib(3), fib(2) and fib(1) are computed multiple times

| Fibonacci call | Times computed |

|---|---|

fib(4) | 2 times |

fib(3) | 3 times |

fib(2) | 5 times |

fib(1) | 3 times |

Time complexity becomes O(2ⁿ)

DP fixes this by:

- Solving each subproblem once

- Storing the result

- Reusing it whenever needed

What Is Dynamic Programming?

Dynamic Programming is an optimisation technique where we store the results of subproblems to avoid recomputation.

Formally:

DP is a technique to solve problems with overlapping subproblems and optimal substructure by storing intermediate results.

DP is not a new algorithm — it’s recursion + memory

Enhanced Recursion

Dynamic Programming is optimised recursion where overlapping subproblems are solved once and reused.

What recursion alone does

- Explores all choices

- Repeats the same subproblems again and again

- Thinks in a top-down way

Example

fib(5)

├─ fib(4)

│ ├─ fib(3)

│ │ ├─ fib(2)

│ │ └─ fib(1)

│ └─ fib(2)

└─ fib(3)

├─ fib(2)

└─ fib(1)Same calls → wasted work

What DP enhances in recursion

DP does three upgrades:

- Memoisation (memory): If I’ve solved it once, don’t solve it again.

- Store the result of (state)

- Reuse it next time

DP is a technique that converts an exponential recursive solution into an efficient one by caching results of overlapping subproblems and solving them in a structured order.

Exponential → PolynomialThe Two Fundamental Properties of DP

Overlapping Subproblems

The same subproblem appears multiple times.

Example

- Fibonacci

- Climbing stairs

- Coin change

- Knapsack

If there is no repetition, DP is useless.

Optimal Substructure

An optimal solution of the problem can be built from optimal solutions of subproblems.

i.e My best answer depends on the best answers of smaller versions of the same problem.

Example

- Shortest path

- Maximum profit

- Minimum coins

If an optimal substructure does not exist, DP fails.

Two Ways to Do Dynamic Programming

Top-Down DP (Memoisation)

- Start from the main problem

- Go downward (recursion)

- Store results

Bottom-Up DP (Tabulation)

- Start from the smallest subproblem

- Build upwards

- No recursion

- Iterative approach

We say DP is about recursion, but in the bottom-up approach, there are no recursive calls. Why is it still called DP?

- Bottom-Up DP removes recursion from the code, not from the logic.

- DP is not about recursion in code — it’s about recursion in logic.

- Every Bottom-Up DP has a hidden recursion. Take an example

fib(n) = fib(n-1) + fib(n-2)

dp[0] = 0

dp[1] = 1

for i in range(2, n+1):

dp[i] = dp[i-1] + dp[i-2]

- Think of Bottom-Up as recursion flattened

fib(0) → fib(1) → fib(2) → fib(3) → fib(4) → fib(5)- Bottom-Up DP is just Top-Down DP, except that the call stack is replaced with loops.

How to guess it’s a DP problem?

Step 1: Can the problem be broken into smaller, similar subproblems?

If you can say: To solve for n, I need answers for n-1, n-2, …

Examples:

- Fibonacci

- Climbing stairs

- Knapsack

- LIS, LCS

👉 This is an optimal substructure.

Step 2: Are you asked to find min/max/count / best way?

Examples:

- maximum profit

- minimum cost

- number of ways

- longest / shortest

👉 DP loves optimisation & counting

3. You have choices at every step that affect future results?

Keywords:

- choose/pick/skip

- take / not take

- buy/sell / cooldown

- include/exclude

👉 Choices = branching → DP.

Final intuition

Don’t guess, DP. You eliminate everything else:

- Too big for brute force ❌

- Greedy fails ❌

- Same subproblem repeats ✅ → DP

One-line DP Detector

If the problem asks for an optimal answer over choices, and the same subproblem can appear again → DP

How to Think in DP?

After identification of the problem as dp problem. Ask these exact questions.

Step 1: What is the state?

What information uniquely defines a subproblem?

- Each independent variable that changes in recursion becomes a DP state dimension.

- A DP state is defined by the minimum set of changing variables needed to uniquely identify a subproblem.

- Based on different variables:

- Number of states =1, we call it 1d dp

- Number of states =2. We call it 2d dp

Example:

- Fibonacci → n

- Knapsack → (index, capacity)

- LCS → (i, j)

Step 2: What is the transition?

- How does the current state depend on smaller states?

- If I am in this state, what are my options, and where do they take me?

Template

dp[state] = best(

dp[next_state_1],

dp[next_state_2],

...

)Example: Fibonacci

Transition moves:

- n → n-1

- n → n-2

dp[n] = nth Fibonacci number

dp[n] = dp[n-1] + dp[n-2]Example House Robber (1D DP)

Choices at the house i

- Skip house i

- Rob house i

dp[i] = max money from houses [0..i]

dp[i] = max(

dp[i-1], # skip

dp[i-2] + nums[i] # rob

)Example Coin Change (Unbounded Knapsack)

Choices

- Skip coin i

- Use coin i again

dp[i][amount] = number of ways using coins[0..i]

dp[i][amount] =

dp[i-1][amount] # skip

+ dp[i][amount - coin[i]] # take againStep 3: What are the base cases/stopping criteria?

Smallest valid inputs.

dp[0] = 0

dp[1] = 1Step 4: What is the final answer?

Which DP state do we return?

The Practical Rule

While learning DP, always follow:

Recursion

↓

Memoization (Top-Down DP)

↓

Tabulation (Bottom-Up DP)

↓

Space Optimization (if possible)- This order matches how your brain understands the problem → how the computer optimises it.

- Top-down matches the natural flow of the problem.

Why 4 approaches to the same problem

| Step | What YOU learn | What COMPUTER gains |

|---|---|---|

| Recursion | Problem structure | Nothing |

| Memoization | Overlapping states | Speed |

| Tabulation | Execution order | Stack-free DP |

| Space Opt | State dependency | Memory efficiency |

- Recursion teaches thinking.

- Memoisation proves DP.

- Tabulation engineers it.

- Space optimisation polishes it.

Tabulation looks easier?

This is valid confusion. Let’s dip dive

- Tabulation is easier to EXECUTE

- Recursion is easier to THINK

- If you already understand the problem → Tabulation is fine

- If the problem is new/tricky/unfamiliar → Start with Recursion

Common Dp pattern

0/1 Knapsack(6 Variation)

Core idea: take or skip (once)

- Subset Sum

- Equal Sum Partition

- Count of Subsets with Given Sum

- Minimum Subset Sum Difference

- Target Sum

- No. of subsets of given difference

Pattern: dp[i][w] → decision-based DP

Unbounded Knapsack — 4 Variations

Core idea: can take an item an unlimited number of times

- Coin Change – Min Coins

- Coin Change – Number of Ways

- Rod Cutting

- Integer Break

Pattern: dp[w] or dp[i][w] with same index reuse

Fibonacci Family — 7 Variations

Core idea: dp[i] depends on the last few states

- Fibonacci Number

- Climbing Stairs

- Min Cost Climbing Stairs

- House Robber

- House Robber II

- Decode Ways

- Tribonacci

Pattern: dp[i] = f(dp[i-1], dp[i-2])

LCS Family — 15 Variations

Core idea: two strings, match or skip

- Longest Common Subsequence

- Longest Common Substring

- Shortest Common Supersequence

- Edit Distance

- Delete Operation for Two Strings

- Insert/Delete to Convert String

- Longest Palindromic Subsequence

- Min Deletions to Make Palindrome

- Distinct Subsequences

- Count Palindromic Subsequences

- Wildcard Matching

- Regex Matching

- Sequence Pattern Matching

- Minimum Insertions to Make a Palindrome

- Print LCS

Pattern: dp[i][j] (string DP backbone)

LIS Family — 10 Variations

Core idea: increasing order + history

- Longest Increasing Subsequence

- Maximum Sum Increasing Subsequence

- Longest Decreasing Subsequence

- Longest Bitonic Subsequence

- Number of LIS

- Russian Doll Envelopes

- Longest Chain of Pairs

- Box Stacking

- Increasing Triplet

- LIS with Difference Constraint

Pattern: dp[i] = best ending at i

Kadane’s Algorithm — 6 Variations

Core idea: local vs global optimum

- Maximum Subarray Sum

- Maximum Circular Subarray

- Maximum Product Subarray

- Longest Subarray with Positive Product

- Maximum Sum Rectangle (2D Kadane)

- Stock Buy & Sell (Single Transaction)

Pattern: reset vs extend

Matrix Chain Multiplication — 7 Variations

Core idea: partition DP

- Matrix Chain Multiplication

- Palindrome Partitioning

- Boolean Parenthesization

- Burst Balloons

- Scramble String

- Egg Dropping

- Min Cost to Cut Stick

Pattern: dp[i][j] = min/max over k

DP on Trees — 4 Variations

Core idea: post-order DP

- Diameter of Tree

- Maximum Path Sum

- House Robber III

- Binary Tree Cameras

Pattern: return multiple values from child

DP on Grid — 14 Variations

Core idea: paths + directions

- Unique Paths

- Unique Paths II

- Minimum Path Sum

- Maximum Path Sum

- Dungeon Game

- Cherry Pickup I

- Cherry Pickup II

- Triangle

- Falling Path Sum

- Falling Path Sum II

- Gold Mine

- Maximal Square

- Count Square Submatrices

- Largest Rectangle in Matrix

Pattern: dp[i][j] from neighbors

Others — 5 Variations

Mixed but important

- Partition Array for Max Sum

- Word Break

- Perfect Squares

- Catalan Numbers

- Dice Roll Ways

Lets Warm-up

Knapsack Family

Example 1: Fibonacci

Example f(6) = f(5) + f(4) so,

f(n) = f(n-1) + f(n-2)

State: we have a dependency on only one variable, n, and it is a 1-D

Transition: we have to travel from n-1 and n-2 to reach n

Base Cases / Stopping Criteria

- dp[0] = 0

- dp[1] = 1

Resursion

def fib(n):

if n <= 1:

return n

return fib(n-1) + fib(n-2)Problem ❌

- Same subproblems repeated

- fib(5) recomputes fib(3) multiple times

- Time: O(2^n)

- This is a decision tree explosion

👉 Recursion gives correct logic, but wastes work

Memoisation (Top-Down DP)

def fib(n, dp):

if n <= 1:

return n

if dp[n] != -1:

return dp[n]

dp[n] = fib(n-1, dp) + fib(n-2, dp)

return dp[n]

#Example

n = 10

dp = [-1] * (n+1)

print(fib(n, dp))

Tabulation (Bottom-Up DP)

def fib(n):

if n <= 1:

return n

dp = [0] * (n+1)

dp[1] = 1

for i in range(2, n+1):

dp[i] = dp[i-1] + dp[i-2]

return dp[n]Space Optimization

- To compute dp[i], we only need dp[i-1] and dp[i-2]

- ❌ We don’t need the full array.

- Replace the DP table with variables

def fib(n):

if n <= 1:

return n

prev2 = 0 # fib(0)

prev1 = 1 # fib(1)

for i in range(2, n+1):

curr = prev1 + prev2

prev2 = prev1

prev1 = curr

return prev1Example 2 Climbing Stairs

Recursion

def climb(n):

if n <= 1:

return 1

return climb(n-1) + climb(n-2) Memoization

def climb(n, memo={}):

if n <= 1:

return 1

if n in memo:

return memo[n]

memo[n] = climb(n-1, memo) + climb(n-2, memo)

return memo[n]

Tabulation

def climb(n):

dp = [0]*(n+1)

dp[0] = dp[1] = 1

for i in range(2, n+1):

dp[i] = dp[i-1] + dp[i-2]

return dp[n]Space Optimization

def climb(n):

a, b = 1, 1

for _ in range(2, n+1):

a, b = b, a+b

return b

Example 3 House Robber

Recursion

def rob(i, nums):

if i >= len(nums):

return 0

take = nums[i] + rob(i+2, nums)

skip = rob(i+1, nums)

return max(take, skip)

Memoization

def rob(i, nums, memo={}):

if i >= len(nums):

return 0

if i in memo:

return memo[i]

memo[i] = max(

nums[i] + rob(i+2, nums, memo),

rob(i+1, nums, memo)

)

return memo[i]Tabulation

def rob(nums):

n = len(nums)

dp = [0]*(n+2)

for i in range(n-1, -1, -1):

dp[i] = max(nums[i] + dp[i+2], dp[i+1])

return dp[0]

def rob():

length = len(nums)

if length==1:

return nums[0]

dp = [0]*length

dp[0] = nums[0]

dp[1] = max(nums[0],nums[1])

for i in range(2,length):

dp[i] = max(dp[i-2]+nums[i],dp[i-1])

return dp[length-1]Space Optimization

find out the code for this Why does the house robber problem feel different from the Fibonacci or the climbing Stairs problem?

- Fibonacci & Climbing Stairs

- : No decision, No Choice

- You must take both paths

- You are counting all possibilities

- House Robber = a decision problem

- You can skip

- Or you can take

- But you cannot take both

So, House Robber is of Knapsack-type DP, while Fibonacci and Climbing are not Knapsack-type.

LCS

Problem to practice

https://www.geeksforgeeks.org/competitive-programming/dynamic-programming

See and remove

Put all 100 list question maps

https://docs.google.com/document/d/1Xn6jyVEuxg_ZqoTSGwvRoYa4g4v6BQbwd1JcAX403Sg/edit?tab=t.0

https://chatgpt.com/g/g-p-696d0a98c504819189a251a99c68858a-dp/c/698ad4c1-1d88-8320-b807-0a4fe142fab8

DP

├── Counting

├── Knapsack / Decision

├── State Machine

├── Interval

└── Tree / Graph

Bitset Knapsack

dp on broken profile

Digit Dp